I was just thinking: wouldn’t it be easier and more productive for everybody if we made a public Shopping API gateway?

So basically, everybody who has an API key would send the shopping data to an API data hub that stores it and makes it available to everybody. This way the data would be more up to date (I don’t think that everybody, or anybody, has a dedicated machine pulling data).

I know there is an issue with how the data is used. The devs said this API is not intended to give a single user an advantage, but to be a tool for people who want to create something for the community. So I guess the hub would not be public: it would only be available to people who actually have an API key (they supply data to the hub in order to get data from it).

I am thinking this hub could run as a master/slave setup, with functionality such as requesting resource information. By keeping track of what data it has been fed, it could also request fresh data for items that are out of date.
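A minimal sketch of that staleness tracking, assuming the hub simply records a timestamp each time a local scanner submits data for an item and then asks scanners to refresh whatever is older than a cutoff. `FreshnessTracker`, `max_age_s` and every item name except Rough Oort are hypothetical.

```python
import time
from typing import Dict, List, Optional


class FreshnessTracker:
    """Hypothetical hub-side record of when each item last received data."""

    def __init__(self, max_age_s: float) -> None:
        self.max_age_s = max_age_s
        self.last_seen: Dict[str, float] = {}

    def record(self, item: str, ts: Optional[float] = None) -> None:
        # Called whenever a local scanner pushes data for an item.
        self.last_seen[item] = time.time() if ts is None else ts

    def stale_items(self, known_items: List[str], now: Optional[float] = None) -> List[str]:
        # Items never seen, or older than max_age_s, returned oldest first:
        # this is the list the hub would ask local scanners to refresh.
        now = time.time() if now is None else now

        def age(item: str) -> float:
            return now - self.last_seen.get(item, float("-inf"))

        stale = [item for item in known_items if age(item) > self.max_age_s]
        return sorted(stale, key=age, reverse=True)
```

Items the hub has never received sort first, because their age is treated as infinite.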

Also, with a socket/version implementation we could have a notification system: instead of asking the server for new data all the time, you just connect to it and it notifies you when a new version of the prices is available, so you can download it into your own database.
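A rough sketch of the version idea, assuming in-process callbacks stand in for the socket connection (a real hub would more likely use WebSockets or something similar). `PriceFeed`, `Client` and their methods are hypothetical names. The hub bumps a version number on every update, and a client only downloads when the announced version is newer than its local copy.

```python
from typing import Callable, Dict, List

Prices = Dict[str, float]


class PriceFeed:
    """In-process stand-in for the hub's push channel."""

    def __init__(self) -> None:
        self.version = 0
        self.snapshot: Prices = {}
        self.listeners: List[Callable[[int], None]] = []

    def subscribe(self, on_new_version: Callable[[int], None]) -> None:
        self.listeners.append(on_new_version)

    def publish(self, prices: Prices) -> None:
        # Merge the new data, bump the version and notify every connected client.
        self.snapshot.update(prices)
        self.version += 1
        for notify in self.listeners:
            notify(self.version)


class Client:
    """An app that keeps its own copy of the prices."""

    def __init__(self, feed: PriceFeed) -> None:
        self.feed = feed
        self.local_version = 0
        self.local_db: Prices = {}
        feed.subscribe(self.on_new_version)

    def on_new_version(self, version: int) -> None:
        # Only download when the hub has something newer than our copy.
        if version > self.local_version:
            self.local_db = dict(self.feed.snapshot)
            self.local_version = version
```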

I was thinking about this because I have one or two ideas for third-party software that might help the community, but I won’t have a way to keep the data fresh by pulling it from the game myself. If other people have the same issue, this would be a nice solution.

If there are people out there who would use a system like this, I would be open to implementing it. I have a free AWS account I could host it on, and a (free) micro server would be enough to handle the traffic.

My knowledge of coding is really nothing compared with the amazing people in this community.

As far as I understand, there is a limit on how often a request can be made, right?

So I was wondering whether it would be possible to have a single server request the data and then post it for everyone to look at?

Is this kind of what you are saying? Again, I just have a basic knowledge of all this stuff lol

A single server doing the requests would mean people would have to share their API key with that server, and I don’t think everybody is OK with that. What I propose is a bit different.

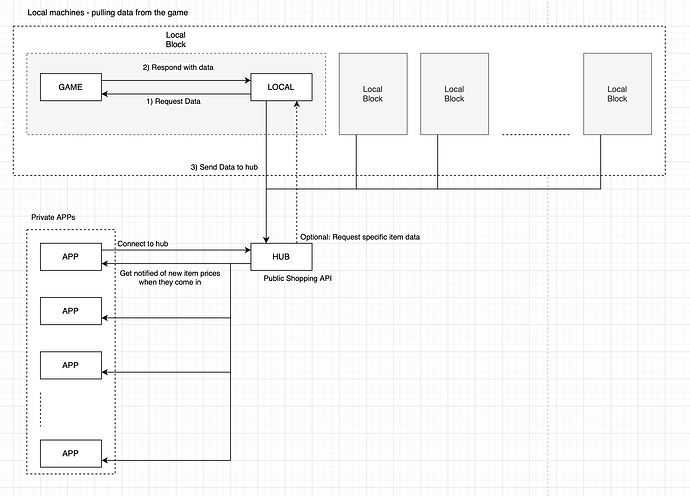

If we break the system into its components, it would look something like this:

- Game

The game running locally on a machine.

- Local

Local server/software that pulls data from the Game.

- Hub

Online server that manages the data from the Game.

- App

Online apps (usually owned by people who have an API key) that pull the data directly from the Hub.

The steps would be something like:

Also, as you said, doing it like this, with a master (the Hub) telling the local software what data to pull, means you would get around the limitation without taxing the servers. It would be the most efficient way to get the shopping data and keep it fresh.
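A minimal sketch of the Hub handing out work, assuming it simply splits its queue round-robin across the local scanners that are currently connected, so no item is requested twice. The hub only sends item names, so each API key stays on its owner's machine. `assign_work` and the scanner names are hypothetical. If the request limit turns out to be global rather than per key, splitting the work like this spreads the load but does not speed up a full pass.

```python
from typing import Dict, List


def assign_work(items: List[str], scanners: List[str]) -> Dict[str, List[str]]:
    """Split the hub's queue round-robin so each item goes to exactly one scanner."""
    if not scanners:
        raise ValueError("no local scanners connected")
    plan: Dict[str, List[str]] = {scanner: [] for scanner in scanners}
    for i, item in enumerate(items):
        # Item i goes to scanner i mod N, keeping the batches balanced.
        plan[scanners[i % len(scanners)]].append(item)
    return plan
```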

Oh! This is what I was thinking, but I clearly lack the knowledge to explain it correctly.

I really hope the community can work with something like this!

Thanks for taking the time to explain it like that

The devs are implementing this currently. Hopefully it’ll be out soon!

They are? :o I’d like to know what’s going on with the API if that’s the case.

On the actual topic: this would be great if the devs allow it. The only problem is that the data consumers (apps) wouldn’t have any control over the scanning order, so prioritising items wouldn’t be possible. But that’s not a huge loss. My system already kind of works in this fashion; I just don’t use sockets to push data to the apps.

Are they implementing a hub where we can dump our data and get it merged, or an actual online API that is not tied to the game, is up to date, and does not have a lot of limitations (at least one that allows updating the prices every 10 minutes or so)?

If the latter, then awesome. It would be amazing to have. I will speed up development on my projects, then, so they’re ready when it lands.

When they last discussed it, it was an API people can pull from. It is per-item, though, which limits the refresh speed.

So we are not talking about the Shopping API that you can actually implement now by requesting an API key, right?

I think he is talking about what it currently is; I haven’t heard anything about a big change to a centralized design. The only thing I’ve heard is that there’s going to be some kind of dynamic delay system, which increases the delay between a single user’s requests when multiple users are hitting the server at the same time.

I didn’t think this would be needed, but of course it can be implemented in the system, presuming the hub can communicate with each scanner (local app) and tell it what to scan first. The priority on the hub could be set by a combination of age (items that have not been updated for some time x) and freshness requests from the apps.

So each app would have settings for prioritizing the items it needs. For example, if 3 apps want the latest data about Rough Oort, that item would be moved to the top of the queue and requested as soon as possible.
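One way to combine age and app requests is a single score per item fed into a priority queue. This is a sketch under an assumed weighting: each app request counts as `weight_s` seconds of extra age, a hypothetical tuning knob, and `scan_order` is an illustrative name.

```python
import heapq
from typing import Dict, List


def scan_order(ages_s: Dict[str, float], requests: Dict[str, int],
               weight_s: float = 600.0) -> List[str]:
    """Order items by age plus a bonus for each app that asked for the item."""
    heap = []
    for item, age in ages_s.items():
        score = age + weight_s * requests.get(item, 0)
        # heapq is a min-heap, so push the negated score to pop the highest first.
        heapq.heappush(heap, (-score, item))
    return [heapq.heappop(heap)[1] for _ in range(len(heap))]
```

With ages of 60 s for Rough Oort, 900 s for Ice and 300 s for Gold, three app requests for Rough Oort lift it to a score of 1,860 and move it ahead of the older items.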

Also, I think that with at least 20 API keys feeding the hub, the data would be extremely fresh, with every item refreshed about once a minute. Of course, this could be set up so high-priority items get a higher refresh rate: if there are 10-20 of them, they could update every second. I don’t think it could get any better than this with the current API.

Of course, this presumes there are local apps running 24h a day. I don’t think that would be the case, especially not that many (20). I usually have mine on for 2-6h/day.

The refresh rate of 1/second isn’t tied to the key; it’s a global delay, so no matter how many API keys you have, it’ll be the same speed.
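The difference between a per-key limit and a global one is easy to put in numbers. A small sketch, assuming one request per `cooldown_s` and a hypothetical 1,200 requests per full pass: 20 scanners under a per-key limit would refresh everything every 60 s, while a single global cooldown stretches that to 1,200 s (20 minutes) regardless of how many keys are involved.

```python
def refresh_interval_s(n_requests: int, n_scanners: int, cooldown_s: float = 1.0,
                       per_key_limit: bool = True) -> float:
    """Seconds until every request in a full pass has been made once.

    per_key_limit=True: each key may make one request per cooldown_s, so
    scanners work in parallel. False: one cooldown shared by every key.
    """
    if n_scanners < 1:
        raise ValueError("need at least one scanner")
    parallel = n_scanners if per_key_limit else 1
    return n_requests * cooldown_s / parallel
```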

In what sense? Global as in for their whole server? So if 10 people send a request at the same time (for the same world/item), the first gets the response instantly, the second 1 s later, the third 2 s later, and so on? Or is it per client?

Yeah, I’m pretty sure it queues requests more or less like this. Mayu knows all, though.

The server won’t respond to requests for 1 second after being queried. While the server is on “cooldown”, if someone requests a new resource that’s not in the CDN cache, I think it’ll return a 403 error. But that hasn’t been tested yet.
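To make the timing issue concrete, here is a toy model of the behaviour described above, not the real game server. The 1-second cooldown and the 403 for uncached resources come from the post (the 403 is explicitly untested); `CooldownServer`, the `fetch` callback and the 60-second cache lifetime are assumptions.

```python
from typing import Callable, Dict, Tuple


class CooldownServer:
    """Toy model: cached resources are always served; uncached ones only outside the cooldown."""

    def __init__(self, fetch: Callable[[str], str], cooldown_s: float = 1.0,
                 cache_ttl_s: float = 60.0) -> None:
        self.fetch = fetch                      # hypothetical: produces fresh data for a resource
        self.cooldown_s = cooldown_s
        self.cache_ttl_s = cache_ttl_s          # assumed CDN cache lifetime
        self.cache: Dict[str, Tuple[float, str]] = {}
        self.last_query = float("-inf")

    def get(self, resource: str, now: float) -> Tuple[int, str]:
        hit = self.cache.get(resource)
        if hit is not None and now - hit[0] < self.cache_ttl_s:
            return 200, hit[1]                  # served from cache, possibly old data
        if now - self.last_query < self.cooldown_s:
            return 403, ""                      # on cooldown: the untested guess above
        self.last_query = now
        body = self.fetch(resource)
        self.cache[resource] = (now, body)
        return 200, body
```

Whether a request for an uncached item succeeds depends only on when it lands relative to the previous fetch, which is the "luck" problem raised in the reply.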

So my idea is out of scope, then. There won’t be any chance of getting data fresher than 20 min for all items, and that presumes the requests for all items are made sequentially (not just by you, but by all the clients requesting data from the server). But this seems counterintuitive to me: if the data is only updated when someone actually makes a request to the server, and you aren’t lucky enough to be the one requesting a new item, you keep getting old data about that item until you manage to make a request while the server is not on cooldown. That would make the validity of the data somewhat random and unreliable.

So basically, my idea does not produce any benefits for anybody.

I think the delay will try to fix that problem by queuing users up, but I have no idea how it’ll be implemented. Oh, and it’ll be 40-60 minutes for a full scan: buy and sell orders are separate requests, and on top of that you add the ping to another continent, so it’ll be slow.

If modified a bit, it does. Building a system that synchronously scans items on 50 different servers and aggregates the results somewhere they can later be queried isn’t simple. If some other entity did all the aggregating, queue management and so on, it’d be useful for smaller tools that just need some simple price data, so they don’t have to implement and run a scanner 24/7.

As I said, my system (BUTT) already works a bit like your hub: I run a separate “scanner” for each planet that scans the items and sends the data to a database. My websites then query that database server through a rudimentary API layer, which gets the data from the database and serves it.
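A minimal sketch of that scanner, database and API-layer split, using SQLite from the standard library. The table layout and the function names are assumptions for illustration; BUTT’s actual schema isn’t described here. Each per-planet scanner appends rows, and the API layer only ever reads the newest row for a planet/item/side.

```python
import sqlite3
import time
from typing import Optional, Tuple


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS prices (
               planet TEXT, item TEXT, side TEXT, price REAL, scanned_at REAL)"""
    )
    return conn


def store_scan(conn: sqlite3.Connection, planet: str, item: str, side: str,
               price: float, scanned_at: Optional[float] = None) -> None:
    # What each per-planet scanner would call after reading one item.
    ts = time.time() if scanned_at is None else scanned_at
    conn.execute("INSERT INTO prices VALUES (?, ?, ?, ?, ?)", (planet, item, side, price, ts))
    conn.commit()


def latest_price(conn: sqlite3.Connection, planet: str, item: str,
                 side: str) -> Optional[Tuple[float, float]]:
    # What the thin API layer would run to answer a website's query:
    # returns (price, scanned_at) for the newest scan, or None if never scanned.
    return conn.execute(
        """SELECT price, scanned_at FROM prices
           WHERE planet = ? AND item = ? AND side = ?
           ORDER BY scanned_at DESC LIMIT 1""",
        (planet, item, side),
    ).fetchone()
```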